Selected work

mosaico-chat — a portable RAG engine, no admin UI required

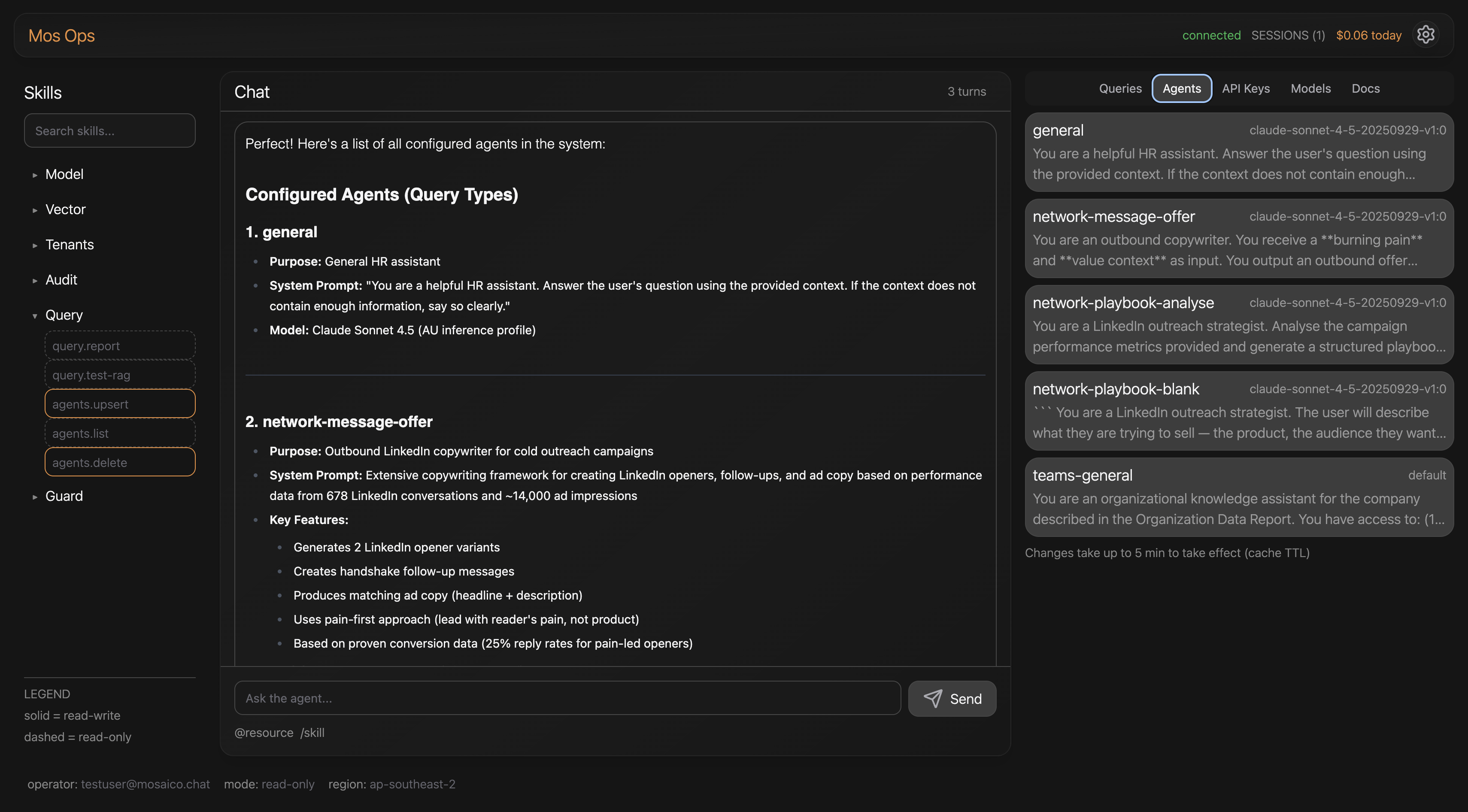

A serverless RAG system that powers AI features across two Mosaico products — managed entirely by an agent, not a dashboard, and ready to plug into any legacy SaaS.

Role

Product & architecture lead

Timeline

Mid-2025 → live · 2 iterations

Years

2025 – 2026

Status

In production · 4 agents · 2 products

The origin

Two Mosaico products — Teams and Network — both needed deep AI. Building the RAG layer twice meant doubled cost, doubled maintenance, and no path to selling the engine on its own. mosaico-chat became the shared spine: one engine, multiple agents, multiple products.

The problem

Off-the-shelf RAG was either too expensive to host or required an admin dashboard the size of a separate product. Custom Bedrock builds were out of reach for legacy SaaS teams. The gap: a portable engine that an agent could configure — no UI to maintain, no infra to run.

The hypothesis

“If an agent can administer the RAG system, the admin UI disappears. What’s left is a public API contract — and that contract is the product.”

Two iterations, one cost curve

Why v2 only became possible in 2026

Iteration 01 · mid-2025

Python/JS · EC2 · Tesla GPU

Cost model

$500/mo fixed

Custom infra was the only viable path: S3 Vectors didn’t exist yet, and OpenSearch cost more than a Tesla-equipped EC2 instance. It was the right call for the moment.

Iteration 02 · 2026 · current

Lambda · Bedrock · S3 Vectors

Cost model

~$0.04 per query · pay-per-use

S3 Vectors made fixed-cost infra obsolete. Lambda removed the always-on bill. Bedrock provided model access without managing weights. Same capability, fraction of the cost.
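The two cost models can be compared with a few lines of arithmetic. The sketch below uses only the two figures quoted above ($500/mo fixed, ~$0.04 per query) and assumes the v2 bill scales linearly with query volume, which is a simplification; the function names are illustrative, not part of mosaico-chat.

```python
# Figures from the case study; the linear model is an assumption.
V1_FIXED_MONTHLY_USD = 500.0   # v1: EC2 + GPU, billed whether or not anyone queries
V2_COST_PER_QUERY_USD = 0.04   # v2: approximate pay-per-use cost


def v2_monthly_cost(queries_per_month: int) -> float:
    """Monthly v2 bill under a purely linear per-query model."""
    return queries_per_month * V2_COST_PER_QUERY_USD


def break_even_queries() -> int:
    """Monthly query volume at which v2 would cost as much as v1."""
    return round(V1_FIXED_MONTHLY_USD / V2_COST_PER_QUERY_USD)


if __name__ == "__main__":
    print("break-even queries/month:", break_even_queries())
    for volume in (100, 1_000, 10_000):
        print(volume, "queries ->", v2_monthly_cost(volume), "USD")
```

Under these assumptions, v2 stays cheaper than v1 for any month below roughly 12,500 queries, and costs nothing when idle.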

Three design decisions

The architectural choices that defined the product

01

Pipeline A — the agent is the admin UI

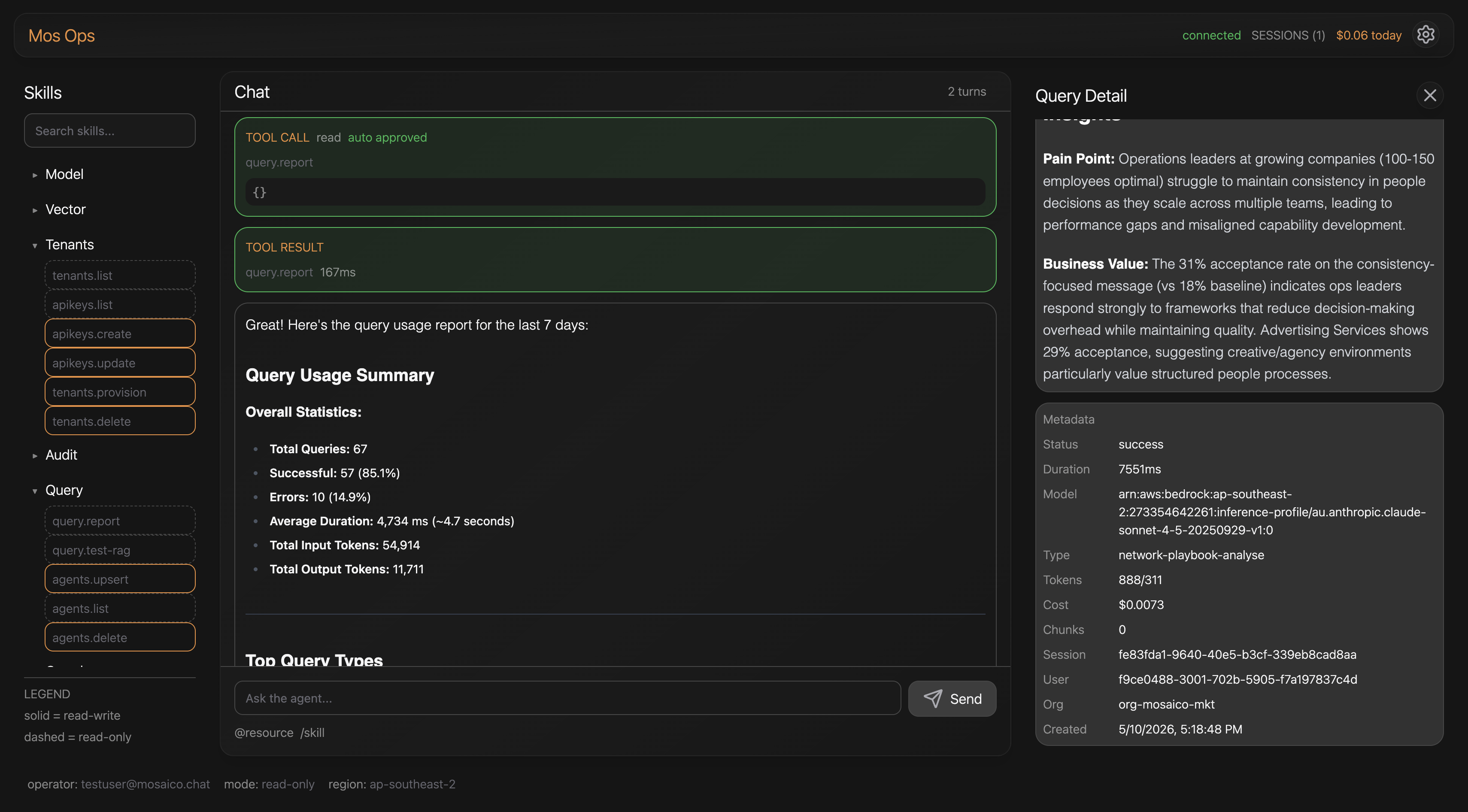

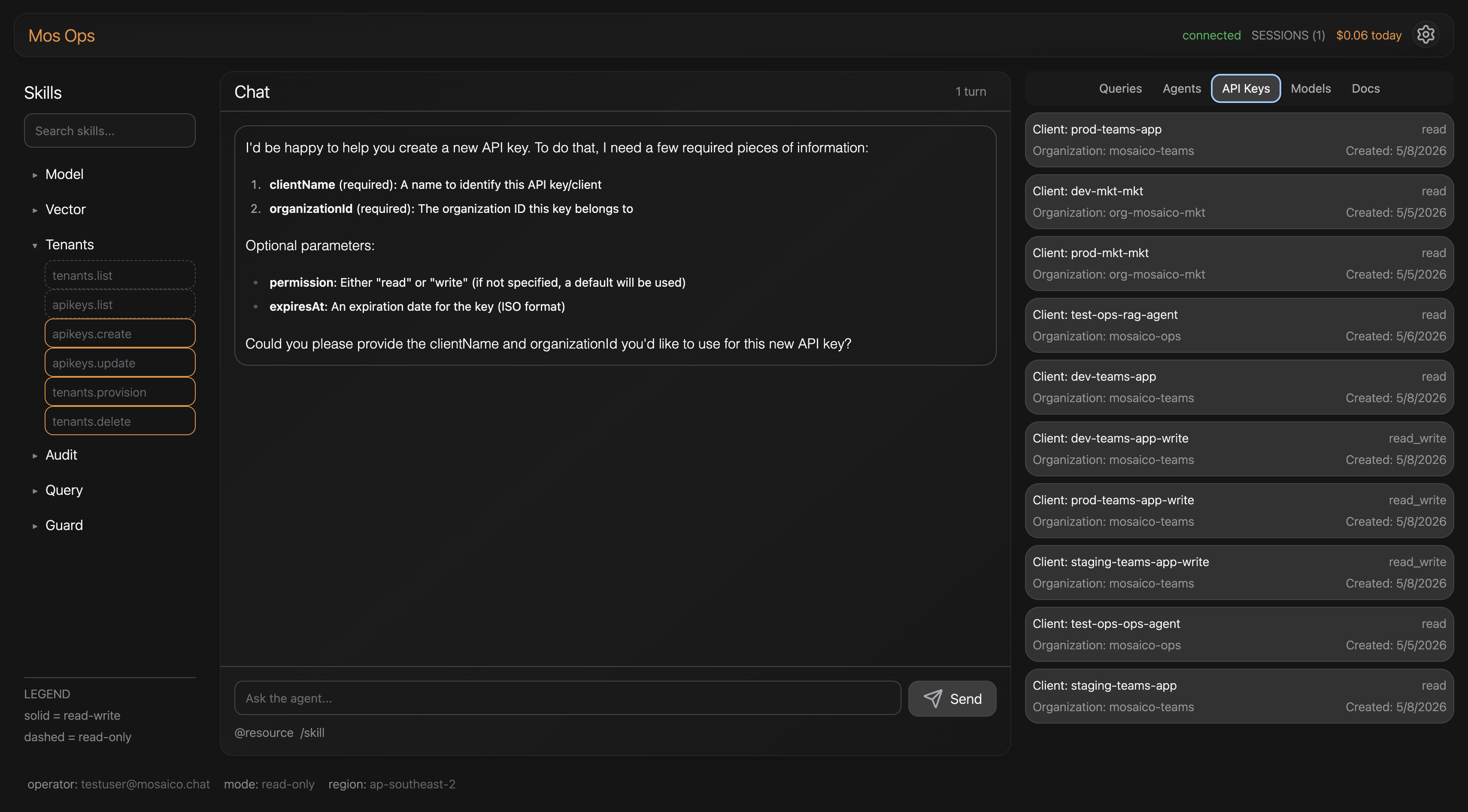

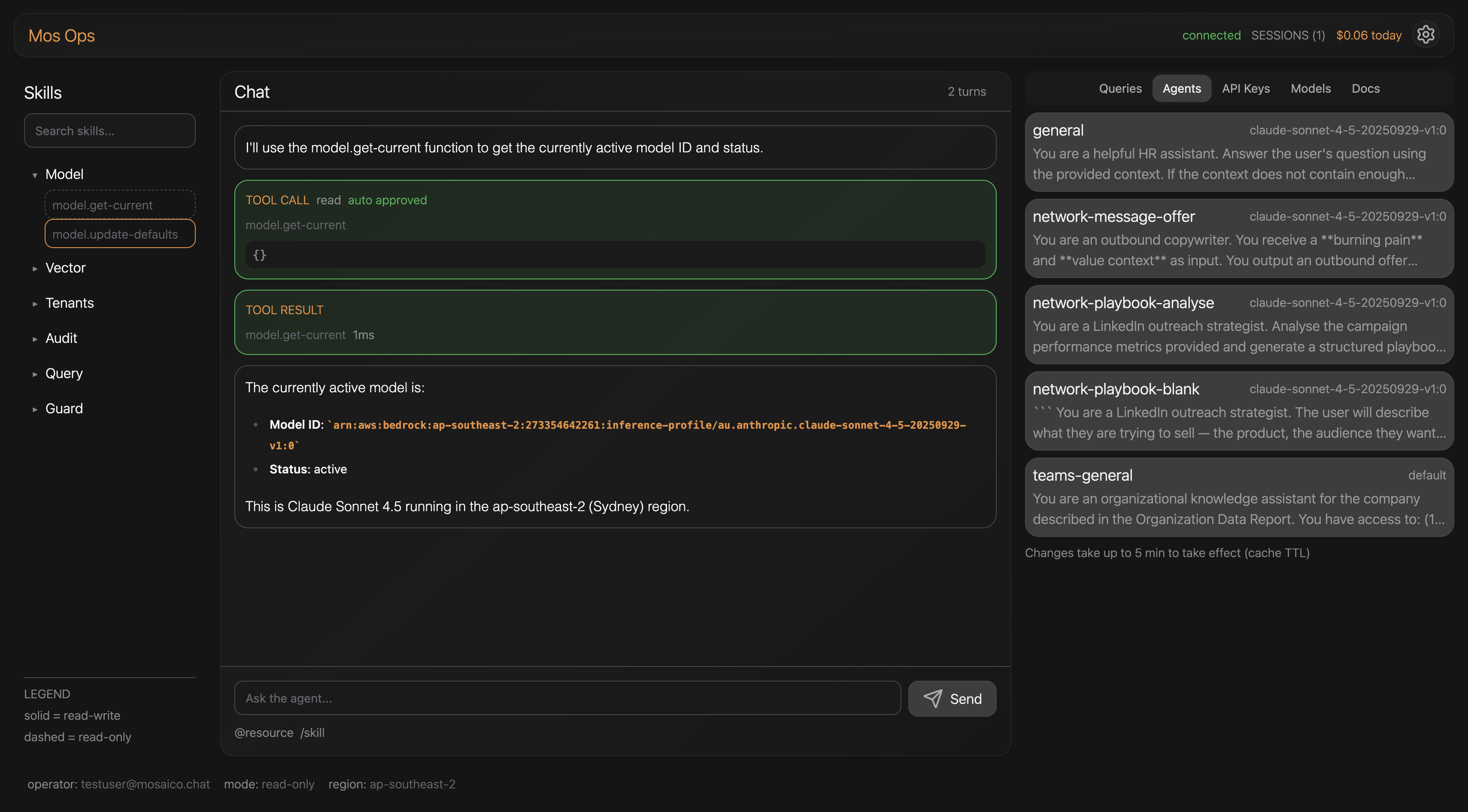

Pipeline A is an agent that manages Pipeline B's configuration (API keys, system prompts, query reports, parameters) by talking directly to DynamoDB and Bedrock. No admin dashboard. No dozens of config screens. Configuration becomes a conversation.
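One way to picture "configuration becomes a conversation" is as a small set of tools the admin agent is allowed to call. The sketch below is illustrative only: `ConfigStore` is an in-memory stand-in for the real config table, and every name in it (`AgentConfig`, `make_tools`, `dispatch`, the tool names, the tool-call shape) is hypothetical rather than mosaico-chat's actual interface.

```python
from dataclasses import dataclass, field
from typing import Any, Callable, Dict, Tuple


@dataclass
class AgentConfig:
    """Hypothetical per-agent settings that an admin agent would manage."""
    system_prompt: str = ""
    parameters: Dict[str, Any] = field(default_factory=dict)


class ConfigStore:
    """In-memory stand-in for the persistent config table."""

    def __init__(self) -> None:
        self._items: Dict[Tuple[str, str], AgentConfig] = {}

    def get(self, tenant: str, agent: str) -> AgentConfig:
        # Create an empty config on first access so tools can mutate it.
        return self._items.setdefault((tenant, agent), AgentConfig())


def make_tools(store: ConfigStore) -> Dict[str, Callable[..., str]]:
    """Build the table of tools the admin agent is permitted to call."""

    def set_system_prompt(tenant: str, agent: str, prompt: str) -> str:
        store.get(tenant, agent).system_prompt = prompt
        return f"system prompt updated for {tenant}/{agent}"

    def set_parameter(tenant: str, agent: str, name: str, value: Any) -> str:
        store.get(tenant, agent).parameters[name] = value
        return f"{name} set to {value!r} for {tenant}/{agent}"

    return {"set_system_prompt": set_system_prompt, "set_parameter": set_parameter}


def dispatch(tools: Dict[str, Callable[..., str]], call: Dict[str, Any]) -> str:
    """Run one tool call shaped like {"name": ..., "args": {...}}."""
    name = call["name"]
    if name not in tools:
        return f"unknown tool: {name}"
    return tools[name](**call["args"])
```

In this sketch, the model turns a request such as "raise top_k to 5 for the compliance agent" into a `set_parameter` call, and the returned string is fed back to it as confirmation. Restricting the agent to an explicit tool table is what would replace the permission logic an admin UI normally carries.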

02

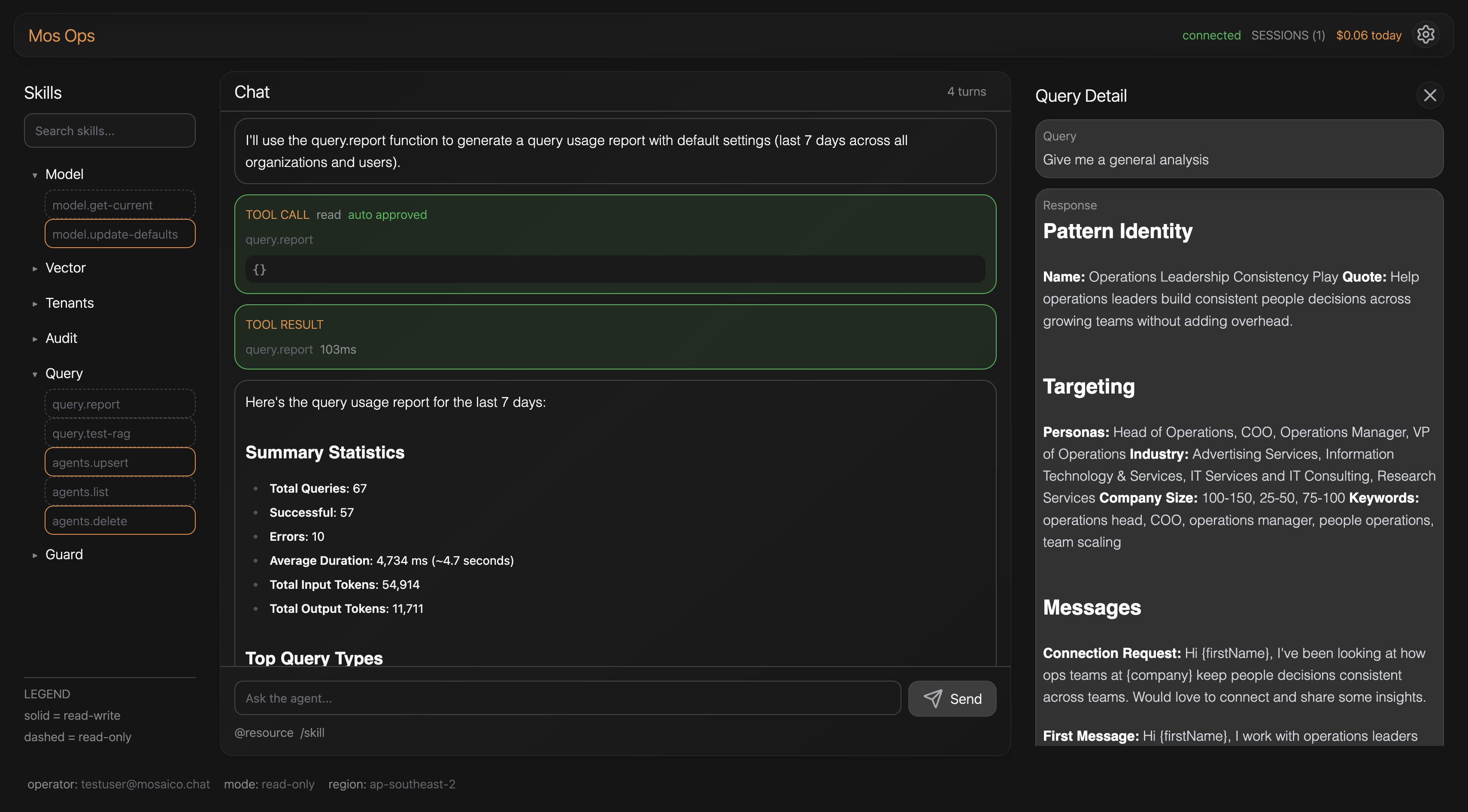

Pipeline B — public API contract, multi-agent per tenant

One contract: NL or data in, NL or data out. Multiple specialised agents per tenant — Teams runs 1, Network runs 3, each scoped to specific contexts and output shapes. The product is the contract; the engine stays out of the customer's stack. Full pipeline observability built in.
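The contract described above (natural language or data in, natural language or data out, several agents per tenant) can be sketched as a pair of types and a router. This is a minimal illustration under assumed names: `QueryRequest`, `QueryResponse`, `Engine` and the field names are hypothetical, and real agents would call a model rather than a plain function.

```python
from dataclasses import dataclass
from typing import Any, Callable, Dict, Tuple, Union

# A payload is either natural language or structured data.
Payload = Union[str, Dict[str, Any]]
Handler = Callable[[Payload], Payload]


@dataclass(frozen=True)
class QueryRequest:
    tenant: str       # e.g. "teams" or "network"
    agent: str        # which specialised agent should answer
    payload: Payload


@dataclass(frozen=True)
class QueryResponse:
    agent: str
    output: Payload


class Engine:
    """Routes each request to the agent registered for (tenant, agent)."""

    def __init__(self) -> None:
        self._agents: Dict[Tuple[str, str], Handler] = {}

    def register(self, tenant: str, agent: str, handler: Handler) -> None:
        self._agents[(tenant, agent)] = handler

    def handle(self, request: QueryRequest) -> QueryResponse:
        key = (request.tenant, request.agent)
        if key not in self._agents:
            # Agents are scoped per tenant; there is no cross-tenant fallback.
            raise KeyError(f"no agent {request.agent!r} for tenant {request.tenant!r}")
        return QueryResponse(agent=request.agent, output=self._agents[key](request.payload))
```

Keying the registry on the (tenant, agent) pair is one simple way to let Teams run one agent and Network run three behind the same entry point while keeping each agent invisible to the other tenant.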

03

Serverless on Bedrock + S3 Vectors — fixed cost out, pay-per-use in

V1 ran on EC2 with a Tesla GPU at a fixed $500/mo because S3 Vectors didn't exist and OpenSearch was more expensive. When S3 Vectors landed, the whole stack moved to Lambda + Bedrock: no idle cost, no model hosting, no infra team. ~$0.04 per query at production load.

Trade-off rejected

Rejected

Per-product RAG + admin dashboard

The default path: build RAG twice (once for Teams, once for Network), each with its own admin UI, each maintained separately. mosaico-chat refused — one engine, agent-administered, public contract. That choice is also what makes it sellable as a standalone product to legacy SaaS.

Outcomes

Metric 01

$500 → $0.04

monthly fixed → per-query · v1 → v2

Metric 02

~4.6s

end-to-end query · Sonnet 4.5 / Bedrock

Metric 03

4 agents

Teams (1) + Network (3) · expanding

Metric 04

2 products

live in production · one shared engine

Qualitative outcome · how it’s used

Teams: org compliance + capability queries against live process data. Network: SDR campaign generation + post-launch performance reports. Same engine, different agent contracts.

Qualitative outcome · observability

Every query is timed end-to-end across 7 stages with hallucination risk and search score recorded — production debugging is a dashboard, not a forensic exercise.
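Per-stage timing of this kind can be captured with a small trace object. The sketch below is an assumption about the general shape, not the production code: the class name, the field names and the stage names used in the demo are hypothetical (the seven real stages are not named here), and the scores are placeholders.

```python
import time
from contextlib import contextmanager
from dataclasses import dataclass, field
from typing import Dict, Iterator, Optional


@dataclass
class QueryTrace:
    """Collects per-stage latency plus quality signals for one query."""
    stage_ms: Dict[str, float] = field(default_factory=dict)
    search_score: Optional[float] = None
    hallucination_risk: Optional[float] = None

    @contextmanager
    def stage(self, name: str) -> Iterator[None]:
        """Time the enclosed block and record it under `name`."""
        start = time.perf_counter()
        try:
            yield
        finally:
            # Recorded even if the stage raises, so failures stay visible.
            self.stage_ms[name] = (time.perf_counter() - start) * 1000.0

    @property
    def total_ms(self) -> float:
        """Sum of all recorded stage durations, in milliseconds."""
        return sum(self.stage_ms.values())


if __name__ == "__main__":
    trace = QueryTrace()
    with trace.stage("retrieve"):      # hypothetical stage name
        time.sleep(0.01)
        trace.search_score = 0.5       # placeholder value
    with trace.stage("generate"):      # hypothetical stage name
        time.sleep(0.01)
        trace.hallucination_risk = 0.1 # placeholder value
    print(trace.stage_ms, round(trace.total_ms, 1))
```

Persisting one such record per query is what makes slow or low-confidence answers searchable after the fact.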

What I learned

The right architecture is the one the cloud catalogue allows — and the catalogue moves. V1 was the right call in 2025; six months later, S3 Vectors made V1 obsolete. The lesson: build for the contract, not the infra. When the substrate shifts, only the implementation has to move.

What’s next

A stack-agnostic SDK so legacy SaaS teams can plug mosaico-chat in without building chat UIs or DB-snapshot pipelines themselves. Expand the agent library on Teams, and start onboarding the first external customer onto the engine as a standalone product.