Selected work

Mosaico Teams App — what AI-native architecture lets two people ship

An HR & ops platform where processes, positions and reports are AI-managed — built in 10 months by 1 FTE + 1 part-time, after a month-6 pivot to an AI-native stack.

Role

Product & design lead · architecture decisions

Timeline

10 months · build → pilot live

Years

2025 – 2026

Status

In pilot · selling since Feb 2026

The origin

Ten years at Jemini taught three hard lessons. Every people process (onboarding, performance, training) was hardcoded, so a new module took 6 months and 10 developers. To absorb every customer’s edge case, modules became bloated and expensive to support. Reports were rigid: pre-built dashboards that rarely matched what the user actually wanted. Mosaico Teams App was built to fix all three.

The reframe

Don’t hardcode processes; let AI manage them. A natural-language description becomes positions, processes, capabilities and compliance records. One-click install for SMBs, full customisation down to the field for enterprise. Reports stop being dashboards and start being conversations against live data via RAG.

The hypothesis

“Hardcoded software is the bottleneck. If processes, positions and reports can all be AI-managed, two people can ship what used to take ten — and the product can be both SMB-ready and enterprise-deep.”

The pivot — month 6

The moment the architecture had to change

Before · months 1–6

Traditional stack · 10% AI-assisted

Trajectory said 24 months to ship the full feature set. Mixing human and AI coding without structured agents created anti-patterns. Reliability suffered.

After · months 7–10

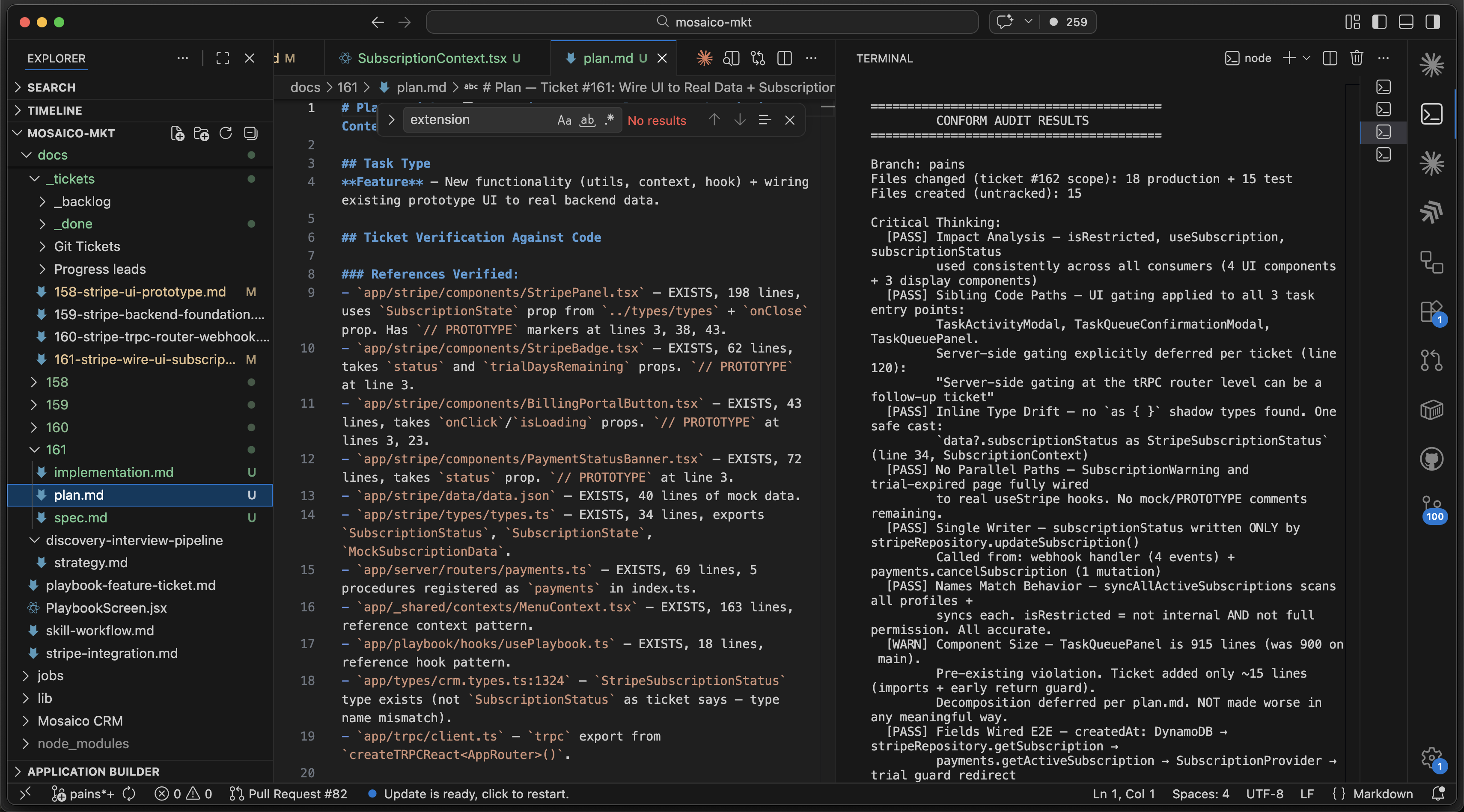

AI-native stack · 99% AI-written

Refactored the architecture for AI. Removed AWS abstractions that offered no code or CLI access for the agents to work with. Adopted a stack the AI knew well. Specialised agents own design, development and deployment. Humans review the output and improve the agents.

Two signals at once: the feature set wouldn’t land in 12 months, and the human-AI hybrid was generating anti-patterns faster than humans could fix them.

Three design decisions

What made the product feasible

01

AI-orchestrated design → develop → deploy pipeline

Specialised agents own each stage. Humans don’t write code: they review it and improve the agents so the same errors don’t recur. The architecture was chosen for AI familiarity, not human preference. The result: 99% AI-written code, fewer anti-patterns, no instability from human-AI mixing.
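A minimal sketch of that loop, to make the division of labour concrete. It is illustrative only: the `Agent` class, `run_pipeline`, `record_review` and the sample rule are hypothetical names invented here, not the platform's actual agents, and the LLM call is replaced by a string stub.

```python
from dataclasses import dataclass, field


@dataclass
class Agent:
    """Hypothetical stand-in for a specialised agent that owns one stage."""
    stage: str
    # Guidance accumulated from human reviews (assumed mechanism).
    rules: list[str] = field(default_factory=list)

    def run(self, artefact: str) -> str:
        # A real agent would call an LLM here; the stub only records the stage.
        return f"{artefact} -> {self.stage}"


def run_pipeline(request: str, agents: list[Agent]) -> str:
    """Pass the artefact through each stage in order."""
    artefact = request
    for agent in agents:
        artefact = agent.run(artefact)
    return artefact


def record_review(agent: Agent, finding: str) -> None:
    """Humans do not patch the output; they add a rule to the agent instead."""
    if finding not in agent.rules:
        agent.rules.append(finding)


agents = [Agent("design"), Agent("develop"), Agent("deploy")]
result = run_pipeline("leave-request process", agents)
record_review(agents[1], "validate date ranges before saving")
```

The point of the sketch is the last line: a review finding changes the agent, not the code, so the fix carries over to every later run.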

02

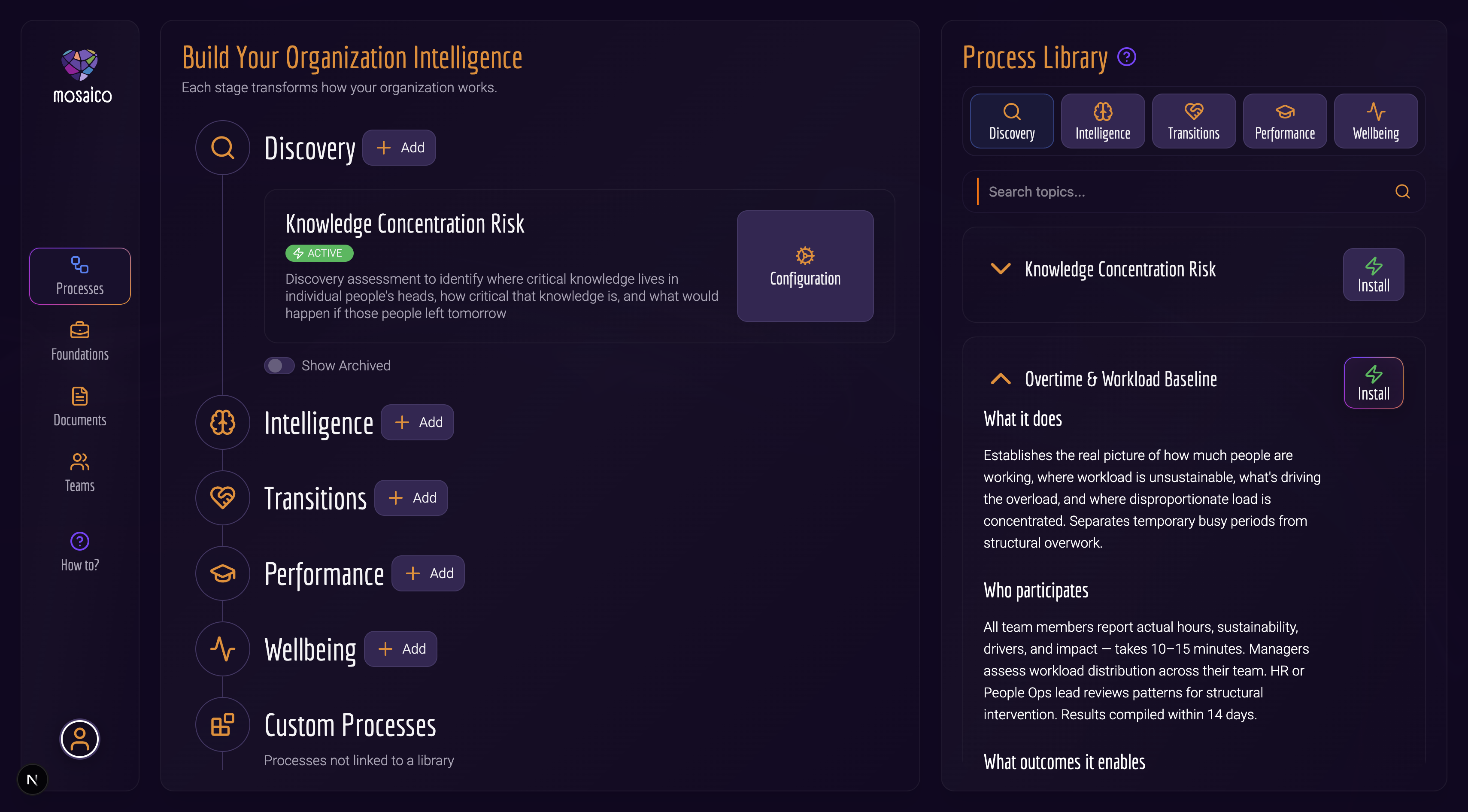

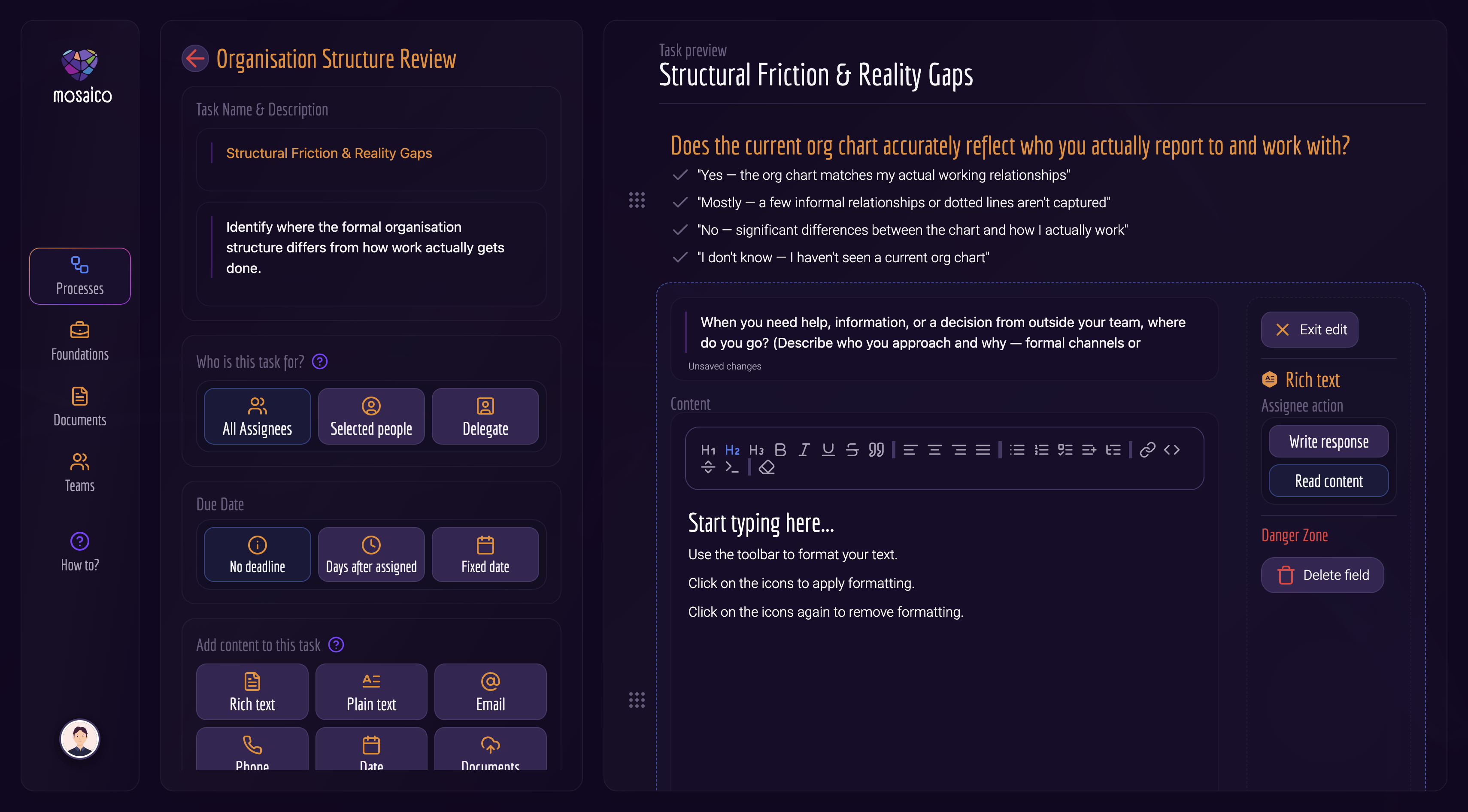

Processes & positions as AI-installable units, not hardcoded modules

Natural language becomes processes, positions, capabilities and compliance records. Pre-built libraries cover 5 industries × 3 sizes (SMB / mid / enterprise) — one-click install. Every process drills down to tasks → fields, with 10 field types. SMB-ready out of the box, enterprise-customisable to the field.
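One way to picture the process → tasks → fields hierarchy and the 5 × 3 library is the sketch below. It is an assumption-laden illustration: the class names, the `install` helper, the placeholder industry names and the two field types shown (`text`, `date`) are all hypothetical, since the source does not list the real industries or the 10 field types.

```python
import copy
from dataclasses import dataclass, field
from itertools import product

SIZES = ("smb", "mid", "enterprise")  # sizes named in the text
# Placeholder names: the five real industries are not stated in the source.
INDUSTRIES = tuple(f"industry-{i}" for i in range(1, 6))


@dataclass
class FieldDef:
    name: str
    type: str  # would be one of the 10 field types; values here are guesses


@dataclass
class Task:
    name: str
    fields: list[FieldDef] = field(default_factory=list)


@dataclass
class Process:
    name: str
    tasks: list[Task] = field(default_factory=list)


def starter_bundle(industry: str, size: str) -> list[Process]:
    """Hypothetical pre-built bundle; a real one would vary by industry and size."""
    details = Task("collect details",
                   [FieldDef("full_name", "text"), FieldDef("start_date", "date")])
    return [Process("onboarding", [details])]


# One bundle per (industry, size) pair: 5 x 3 = 15 entries.
LIBRARY = {(i, s): starter_bundle(i, s) for i, s in product(INDUSTRIES, SIZES)}


def install(tenant: dict, industry: str, size: str) -> int:
    """Copy a bundle into the tenant so it can be customised down to the field."""
    bundle = copy.deepcopy(LIBRARY[(industry, size)])
    tenant.setdefault("processes", []).extend(bundle)
    return len(bundle)


tenant: dict = {}
installed = install(tenant, "industry-1", "smb")
```

Because `install` deep-copies the bundle, a tenant can change any field without touching the shared library, which is the property that lets the same unit serve both the SMB and the enterprise case.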

03

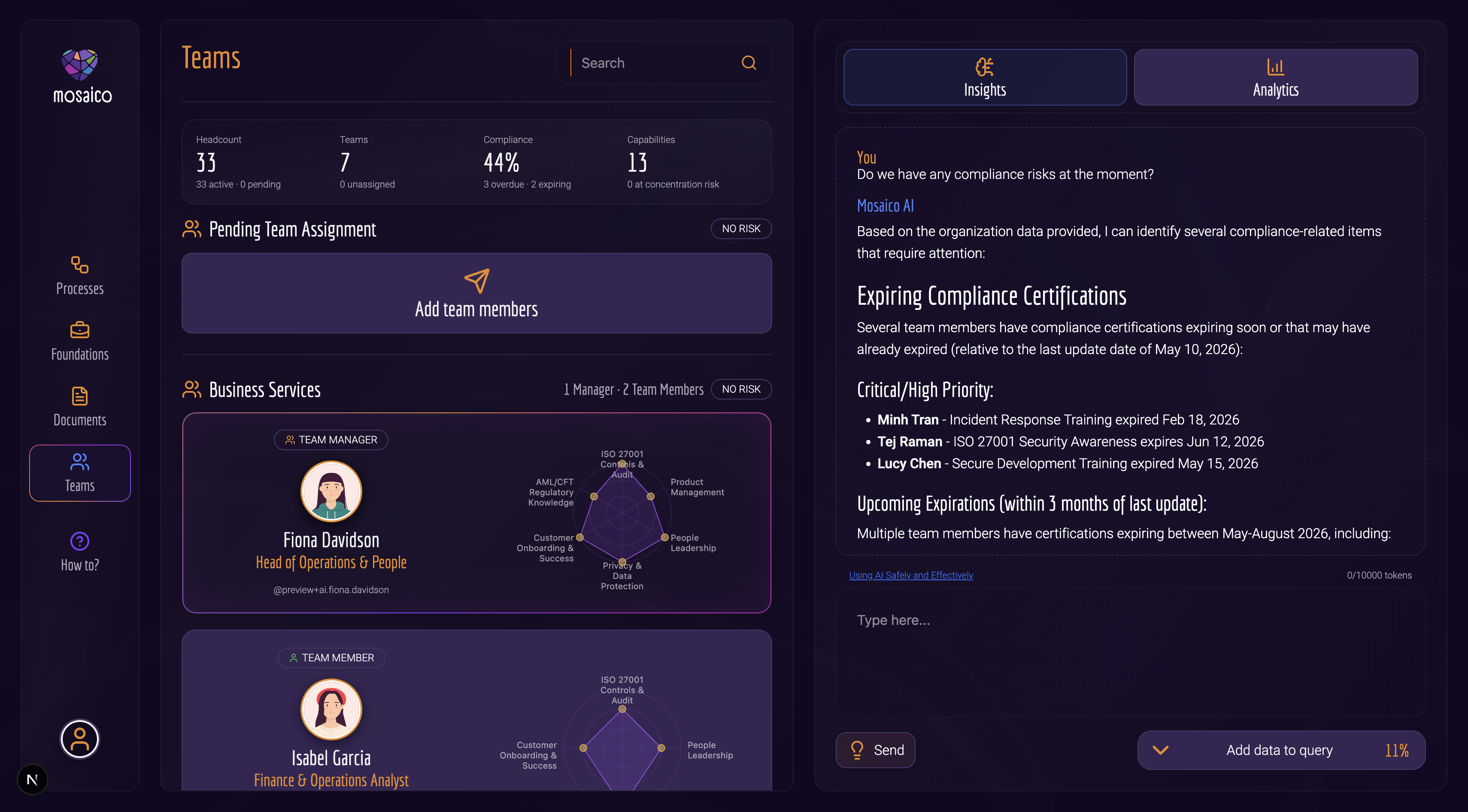

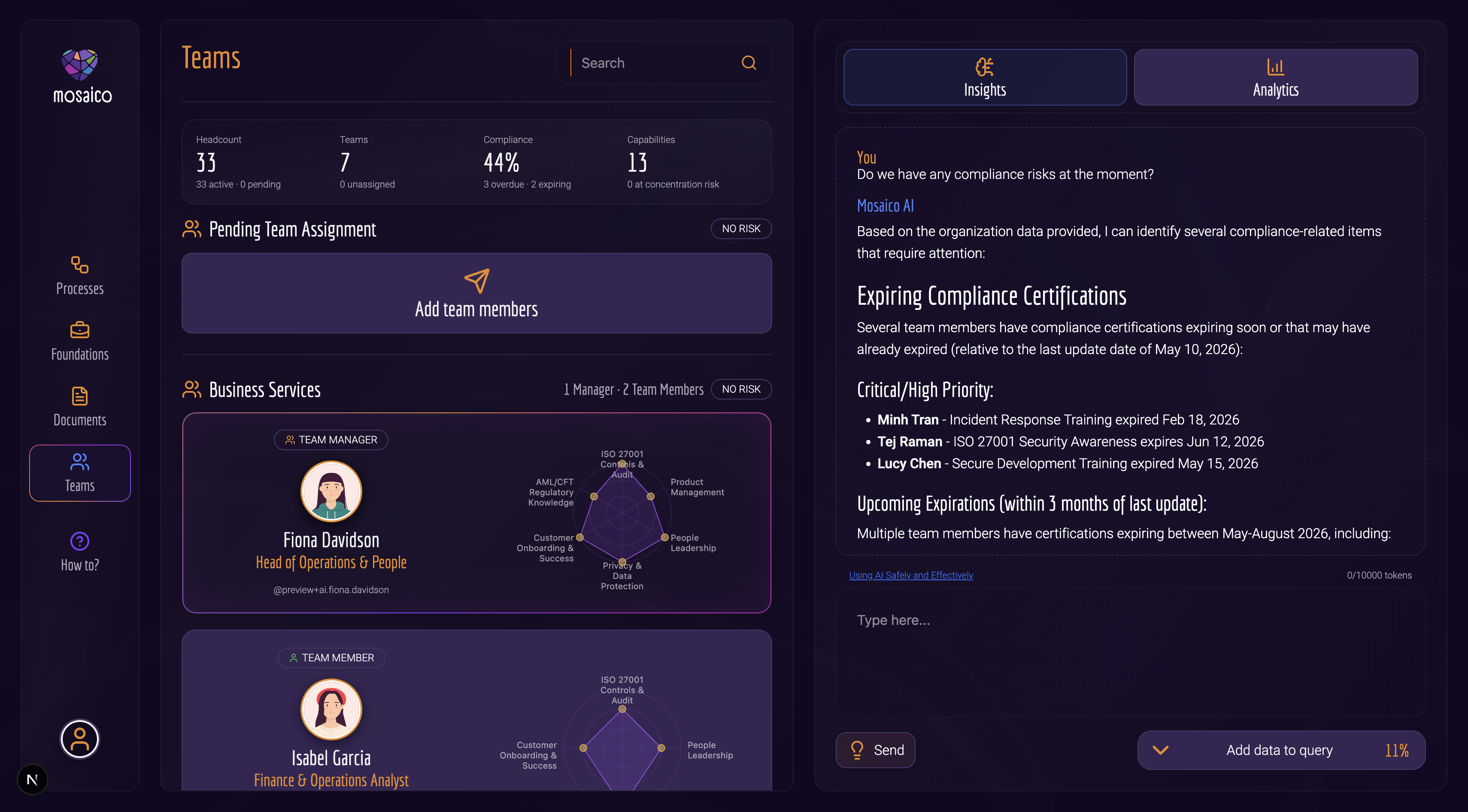

RAG over live data — reports as conversations, not dashboards

All process, progress, position, capability and compliance data is exposed to a RAG layer. Users ask in plain language; the LLM produces the report in seconds. No more building a dashboard for every question, no more digging through pre-built views. Build cost drops, user friction drops, and the answer fits the exact question asked.
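The retrieve-then-prompt pattern behind this can be sketched in a few lines. Everything in it is an assumption for illustration: the sample records are made up, `retrieve` and `build_prompt` are hypothetical helpers, and simple keyword overlap stands in for whatever retrieval the platform actually uses (the source does not say). The LLM call itself is left out.

```python
import re

# Made-up sample records standing in for live platform data.
RECORDS = [
    "process onboarding: 3 of 5 tasks complete, 1 task overdue",
    "position warehouse lead: forklift capability required",
    "compliance first aid certificate: renewal pending",
]


def tokens(text: str) -> set[str]:
    """Lowercase word set, ignoring punctuation."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(question: str, records: list[str], k: int = 2) -> list[str]:
    """Rank records by keyword overlap with the question (stand-in for vector search)."""
    q = tokens(question)
    ranked = sorted(records, key=lambda r: len(q & tokens(r)), reverse=True)
    return ranked[:k]


def build_prompt(question: str, context: list[str]) -> str:
    """Assemble the text that would be sent to the LLM."""
    lines = "\n".join(f"- {c}" for c in context)
    return f"Answer using only these records:\n{lines}\n\nQuestion: {question}"


question = "Which onboarding tasks are overdue?"
prompt = build_prompt(question, retrieve(question, RECORDS, k=1))
```

Only the retrieved records reach the model, so each new question costs a query rather than a new dashboard.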

Trade-off rejected

Rejected

Hardcoded modules + pre-built dashboards

The Jemini path: every process is a module, every report is a dashboard, every customer edge case bloats both. 6 months × 10 devs per module. Mosaico refused to repeat it. AI-managed records and RAG-driven reports collapse the cost curve and remove the bloat.

Outcomes

Metric 01

10 mo

build · 1 FTE + 1 part-time

Metric 02

99%

code AI-written · post-pivot

Metric 03

10k / 200

unit tests · E2E scenarios · ~80% coverage

Metric 04

months → sec

process install time · vs Jemini era

Qualitative outcome · pilot

Selling since Feb 2026. First pilot customer with ~10 users on the platform. Library now covers 5 industries × 3 business sizes for one-click install.

Qualitative outcome · reliability

Zero crashes, no production bugs. AI scans the codebase for any reported issue in seconds — debugging is no longer a human bottleneck.

What I learned

AI is the ultimate augmentation when you know how to use it — it takes an idea to production code fast and lets you test what works at a fraction of the old cost. The win isn’t speed alone. It’s the cost of being wrong dropping low enough that experimentation becomes the strategy, not the exception.

What’s next

Expand the pilot to more customers, deepen the process library across more industries and sizes, and push the AI agents further into customer-facing surfaces — onboarding, configuration, support — where each interaction further trains the platform.